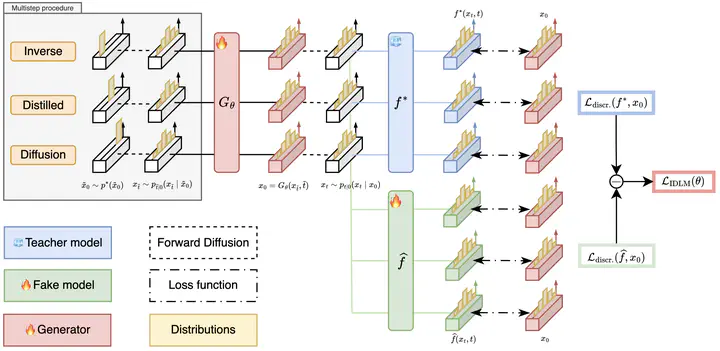

Overview of standard Diffusion Language Models and our IDLM approach, which keeps strong generation quality while making inference much faster with only a few steps.

Overview of standard Diffusion Language Models and our IDLM approach, which keeps strong generation quality while making inference much faster with only a few steps.Abstract

Diffusion Language Models (DLMs) have recently achieved strong results in text generation. However, their multi-step sampling leads to slow inference, limiting practical use. To address this, we extend Inverse Distillation, a technique originally developed to accelerate continuous diffusion models, to the discrete setting. Nonetheless, this extension introduces both theoretical and practical challenges. From a theoretical perspective, the inverse distillation objective lacks uniqueness guarantees, which may lead to suboptimal solutions. From a practical standpoint, backpropagation in the discrete space is non-trivial and often unstable. To overcome these challenges, we first provide a theoretical result demonstrating that our inverse formulation admits a unique solution, thereby ensuring valid optimization. We then introduce gradient-stable relaxations to support effective training. As a result, experiments on multiple DLMs show that our method, Inverse-distilled Diffusion Language Models (IDLM), reduces the number of inference steps by 4x-64x, while preserving the teacher model’s entropy and generative perplexity.